Abstract

Purpose

Artificial intelligence (AI) refers to systems or combinations of algorithms that mimic human intelligence. ChatGPT is AI-based software recently developed by OpenAI. One of its potential uses is to look up information about pathologies and treatments. Our objective was to assess the quality of the information provided by AI tools such as ChatGPT and to establish whether it is a safe source of information for patients.

Methods

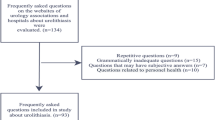

Questions about bladder cancer, prostate cancer, renal cancer, benign prostatic hypertrophy (BPH), and urinary stones were submitted to ChatGPT 4.0. Two urologists analysed the responses provided by ChatGPT using the DISCERN questionnaire and a brief instrument for evaluating the quality of informed consent documents.

Results

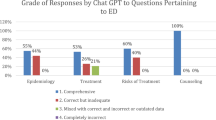

The overall information provided for all pathologies was well balanced. For each pathology, the response explained the anatomical location, the affected population, and the symptoms, and concluded with the established risk factors and possible treatments. All treatment answers received a moderate quality score on DISCERN (3 of 5 points). The answers about surgical options included the recovery time, type of anaesthesia, and potential complications. After analysing all the responses related to each disease, all pathologies except BPH achieved a DISCERN score of 4.

Conclusions

Information from ChatGPT should be used with caution, since the chatbot does not disclose its sources of information and its answers may contain bias, even for simple questions about the basics of urologic diseases.

Data sharing

The questions and ChatGPT's answers are available in the Open Science Framework repository (https://doi.org/10.17605/OSF.IO/8UNQV). The corresponding author will provide data from the valid questionnaires upon reasonable request.

Funding

All authors certify that they have no affiliations with or involvement in any organization or entity with any financial interest or non-financial interest in the subject matter or materials discussed in this manuscript.

Author information

Contributions

JJS: Project development, data collection and analysis, manuscript writing. CTF: data collection. ARA: data analysis, manuscript writing. AGT: Manuscript writing. FJDG: Manuscript writing. LLG: Project development, manuscript editing, final approval.

Ethics declarations

Conflict of interest

The authors declare no conflicts of interest.

Ethics approval and informed consent

No human patients were involved in the study, so no Ethics Committee approval was required.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Szczesniewski, J.J., Tellez Fouz, C., Ramos Alba, A. et al. ChatGPT and most frequent urological diseases: analysing the quality of information and potential risks for patients. World J Urol 41, 3149–3153 (2023). https://doi.org/10.1007/s00345-023-04563-0

DOI: https://doi.org/10.1007/s00345-023-04563-0